I have this month already blogged about good ways to use the Actual Number of Rows and Estimated Number of Rows properties, about the confusing representation on operators that execute more than once, and about the confusing choice to render arrow width based on the Number of Rows Read when it is available.

Today I’ll show yet another case where execution plans can mislead you. Not as common as the previous two situations, but with the potential to cause heavy panic when you first encounter it.

Misleading estimates caused by missing nodes

The issue I will talk about today is caused when a node is missing, due to a post-optimization rewrite. I myself have so far only seen this in execution plans where a bitmap filter (created by a Bitmap operator, by a Batch Hash Table Build operator, or by a Hash Match operator with a BitmapCreator property) was pushed into an Index Scan, Clustered Index Scan, or Columnstore Index Scan operator. So that’s what I’ll focus on here. But do keep in mind that there may be other situations where a post-optimization rewrite removes a node.

To understand the root cause of this (and, credit where credit is due, I only understood this myself after getting it explained by Paul White, whose explanation I am now paraphrasing here), we need to dig deeper into the internals of the optimizer.

Typically, the optimizer starts with an execution plan that closely matches the query. It then applies various transformation rules, each changing details and order of the operations; and assesses how all those changes would affect the estimated cost. This repeats until one of several conditions to stop the process is met; at which time the optimizer picks the version of the execution plan with the lowest estimated cost and returns that as the execution plan for the query.

One of the many transformations used during this optimization phase is to push the Filter operators used to process predicates deeper in the plan tree. For single-table predicates, this usually causes the Filter operator to end up as a Predicate property in a Scan or Seek operator, the most extreme and most efficient form of pushing down the predicate. And while this is possible for both “normal” single-table predicates as well as for bitmap-based predicates, the optimizer is NOT able to push the latter completely into the scan or seek. So if we were to be able to see the raw output of the optimizer represented as a graphical execution plan, then a branch that uses a bitmap filter would look like the (manually edited) picture below:

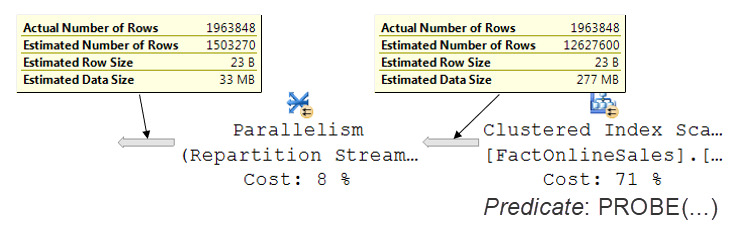

That’s not what we actually see, though. In the execution plan we do get in a case such as this, the Filter operator is not present, and the bitmap verification is pushed down into the Clustered Index Scan. The optimizer itself may not be able to do this, but SQL Server does support bitmap filtering in scan operators as a way to get even better performance. In order to ensure that this is done where possible, the raw output of the optimizer is not used directly, but first checked by a process that Paul White calls a “post-optimization rewrite”. (And who am I to disagree with Paul?) This rewrite checks the execution plan, looking for various patterns it can optimize. This is one of them. When the post-optimization rewrite recognizes a bitmap filter just to the left of a scan operator, it adds the corresponding Predicate property to the scan and removes the Filter operator. However, it will not change any other properties. And this means that the Estimated Number of Rows property of the Clustered Index Scan now no longer reflects the actual output of the operator, as shown in the picture below:

This result of the post-optimization rewrite is confusing and misleading in two ways. First, the one that most people will trip over, the huge difference between Actual Number of Rows and Estimated Number of Rows coming out of the Clustered Index Scan can easily cause you to suspect a huge cardinality estimation error where there is none. And second, one that most people will probably overlook: just looking at the Estimated Number of Rows properties of all operators, the reported data suggests that the optimizer expects the Parallelism to somehow return far less rows then it receives, which is of course not the case.

The best way to identify when a post-optimization rewrite has removed an operator is to look at the Node ID property of each operator. This property holds, for each operator, a unique value that can be used to identify the node. These numbers are assigned by the optimizer, and the operators (nodes) are always numbered sequentially, left to right and top to bottom. The post-optimization rewrite does not change them. And that makes these numbers a great tool to identify where operators have been removed after the optimization. In the execution plan above, there will be a gap of 1 between the Node ID of the Parallelism operator and that of the Clustered Index Scan operator. That missing number was the Node ID of the Filter operator, that was removed by the post-optimization rewrite.

As mentioned before, the above scenario is the case I have encountered of a missing operator due to the post-optimization rewrite. There may be other scenarios that can cause a similar confusing situation. So I suggest that you should be aware that this might happen if you see a bitmap filter pushed into a scan or seek operator, but that you should also be aware that operators might be missing in other cases too, and that in those cases, properties such as the Estimated and Actual Number of Rows could be misleading as well. You can always check the Node ID properties of all operators in an execution plan to see if any operators have been removed.

Of course, it would be much easier if the post-optimization rewrite would be a bit smarter about what properties to affect. When pushing a Predicate property into an operator, it should of course affect the Estimated Number of Rows property of that operator to correctly reflect the rewritten version of the execution plan. The property may at this stage not be needed for optimization purposes anymore, but DBAs and developers will still look at it.

Please vote for my suggestion if you would like Microsoft to invest resources in improving this situation.

1 Comment. Leave new

[…] Hugo Kornelis points out another issue when checking arrow width when reading execution plans: […]