Back in hospital

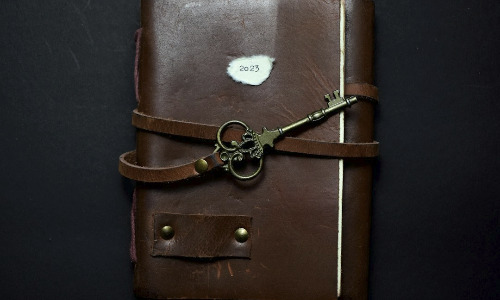

(Warning: This blog post is not technical; it is purely personal. I write openly about my current health issues. This post might be triggering for people who are struggling with cancer or similar diseases, or who have lost loved ones to them.)

Time for an update in my leukemia diary. I had planned to write as soon as I was admitted to the hospital, but I changed that plan a bit, for reasons I’ll get into later. In any case, I am in hospital now, and have been for a few days already.

Awkward communication

The last thing I had heard from my hematologist when I wrote the previous blog post was…

Read More